“The Math Works”

Agentic AI and the Politics of Inevitability

Signal/Noise is an ongoing series that cuts through the spectacle to find the structure underneath. Each installment takes a breaking moment (political, cultural or economic) and traces the power dynamics most coverage misses. The signal is always there. You just have to know what to listen for.

I. They Know and You Don’t

On February 13, 2026, a tech insider named Jeff Kirdeikis posted something that stopped me cold. “I have come to report,” he wrote, “no one outside twitter knows what the fk claude code or openclaw is. They have no idea what’s coming.”

Not a warning. Not a plea. Just a man looking out at the rest of humanity and noting, with the casual confidence of someone watching from a window, that the world is about to change and most people are still asleep.

The tone is the tell.

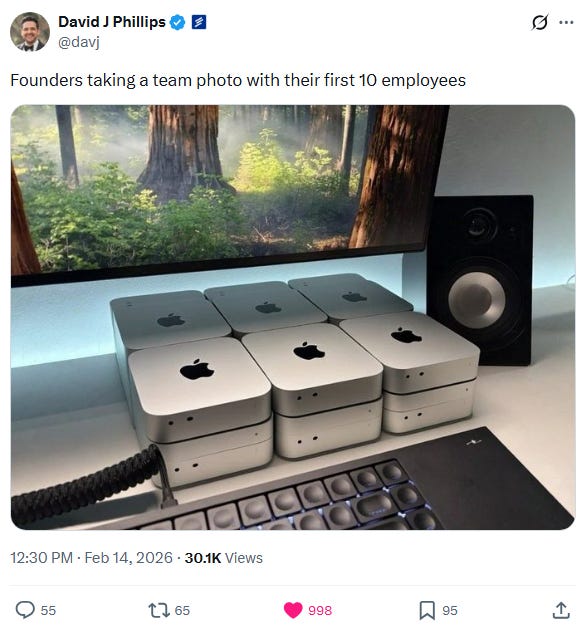

There is a class of people right now (including engineers, founders, investors and builders) who are watching something extraordinary unfold in real time. And they’re not hiding it. They’re posting about it constantly. They’re excited. Some are warning you. Most are just describing their Monday. And the gap between what they’re living and what the rest of the world understands is growing wider by the week.

Here is what they’re describing: a threshold has been crossed.

You probably still think of AI the way most people do: as a sophisticated search engine. Something you prompt. Something that helps you draft an email or summarize a document. Basically just a tool (though, granted, an impressive and occasionally surprising tool). That framing made sense twelve months ago. It doesn’t describe what exists today.

An Austrian developer named Peter Steinberger built a personal AI agent called OpenClaw as a weekend project in November 2025. By the end of January 2026, nearly 200,000 developers were building on top of it. By mid-February (just weeks later) OpenAI had signed Steinberger to bring it to the world. Andrej Karpathy, the former Tesla AI Director and one of the architects of modern AI, looked at what OpenClaw was doing and called it “genuinely the most incredible sci-fi” he had ever seen. The man who helped invent the future was experiencing the present as science fiction.

A content creator filming a tutorial on how to use these systems - not a researcher, not a philosopher, but a YouTube educator selling a six-hour how-to course - opened his video with this: “We’re moving from AI as a tool to AI as a workforce. From AI that responds to AI that initiates. From AI that waits to AI that acts.”

That’s the threshold, stated plainly, in a tutorial video. The future is being taught in six-hour courses to early adopters while most people haven’t noticed the subject exists.

And then this: on February 12th, the author of the viral essay warning 83 million people about AI disruption admitted that AI helped him write it. The system being described was also the system doing the describing. The warning was already inside the machine.

Last week, in The Architecture of Not Knowing, I argued that whoever designs the knowledge systems determines what’s thinkable. This week, those systems are becoming autonomous actors. They no longer need us to think at all.

That is the threshold. It was crossed while you were looking somewhere else.

II. The Numbers That Should Make This Uncomfortable

Here is a simple calculation: if an AI agent handles a task that would have consumed twenty hours of human labor each week (familiar tasks like inbox management, scheduling, customer responses, research and content drafting) and you pay that agent nothing beyond minimal API costs, you have just permanently recovered over a thousand hours of annual labor capacity. At any professional billing rate, that is tens of thousands of dollars per year no longer flowing to the person who used to do that work.
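
The arithmetic behind that claim can be checked in a few lines. What follows is a minimal sketch, not a model of any real workplace: the 52-week year, the sample hourly rates and the `annual_api_cost` parameter are all illustrative assumptions of mine, and the function names are hypothetical.

```python
# Illustrative arithmetic only. Every number below is an assumption,
# except the twenty hours per week taken from the paragraph above.

WEEKS_PER_YEAR = 52  # assumption: the agent covers the task every week of the year


def annual_hours_recovered(hours_per_week, weeks=WEEKS_PER_YEAR):
    """Hours of human labor per year that the agent now absorbs."""
    return hours_per_week * weeks


def annual_value_shifted(hours_per_week, hourly_rate, annual_api_cost=0.0):
    """Wages no longer paid to the worker, minus what the agent costs to run."""
    return annual_hours_recovered(hours_per_week) * hourly_rate - annual_api_cost


# Twenty hours a week comes to 1,040 hours a year: "over a thousand".
print(annual_hours_recovered(20))

# Hypothetical hourly rates, to show where "tens of thousands" comes from.
for rate in (25, 50, 100):
    print(rate, annual_value_shifted(20, rate))
```

Even at the lowest of these assumed rates, the yearly figure lands in the tens of thousands of dollars, and a nonzero API cost only trims it at the margin.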

Now scale it. McKinsey’s 2025 and 2026 industry data shows operational cost reductions of 20 to 30 percent across sectors. Finance is processing 60 percent faster. Logistics firms are documenting over a million dollars in monthly savings. Retail is moving inventory 50 percent faster. Manufacturing is cutting costs by nearly a quarter through predictive maintenance alone.

These are not projections. These are results being reported right now by actual companies.

Mustafa Suleyman, co-founder of DeepMind and CEO of Microsoft AI, recently told the Financial Times that within 12 to 18 months, AI will achieve human-level performance on most white-collar tasks - those now done by lawyers, accountants, project managers and marketing professionals amongst others. Dario Amodei, the CEO of Anthropic and probably one of the most safety-focused executives in the industry, has publicly predicted that AI will eliminate 50 percent of entry-level white-collar jobs within one to five years. Many in the industry think he’s being conservative.

A Stanford study has already documented a 13 percent relative decline in employment for early-career workers in the most AI-exposed occupations, and researchers note the real figure is likely higher, depending on the sector. Goldman Sachs estimates 300 million jobs will be affected globally by 2028. That is 24 months from now.

There is also a measurement problem. The agencies responsible for tracking employment are slow by design, politically pressured by circumstance, and methodologically unprepared for a disruption of this speed. The data will almost certainly lag the reality (it always does, but the gap will be far more pronounced now). By the time the official numbers confirm what is happening, millions of people will already be living it without a framework to understand why.

One venture capitalist, watching this unfold in his own company in real time, recently reported that OpenClaw had offloaded 10 percent of his knowledge workers’ tasks in the first two weeks of use. His projection for April: 50 to 60 percent. Not someday. April.

I’ll be honest with you: I also find myself feeling what Daniel Pinchbeck recently described as a ‘vertiginous sense of limitless possibility’ - that intense desire to move faster, and to capture everything before the window closes. I understand that feeling because I’m living it. But I’ve started to recognize it for what it also is: the feeling of a system accelerating in ways that serve capital accumulation first, and human flourishing only if we insist on it.

The question that the numbers don’t answer (but that everything that follows in this piece will try to) is this: more efficient for whom? Savings for whom? Faster for whom?

Because every percentage point of cost reduction is a transfer of value from the person who used to do that work to the person who owns the system that replaced them. That is not a bug in the math; that is the math.

III. The Architecture of Displacement

Some people find the numbers in the previous section reassuring. Not because they dispute them, but because they’ve seen this movie before. Every technological revolution, they argue, displaced workers in one sector while creating new ones somewhere else. The car killed the horse industry and gave birth to mechanics, gas stations and road construction. Electricity upended existing industries and powered entirely new ones. The internet disrupted retail and expanded logistics, streaming and services. Humanity adapted. It always does.

This is a reasonable argument. It has historical support. And it is precisely correct about every previous technological disruption. The problem now, however, is speed. And simultaneity.

Every prior wave of automation displaced workers in one sector over decades, leaving time for adjacent industries to grow and absorb the transition. The car replaced the horse industry over the course of thirty years while simultaneously building new industries around itself. Workers had both a destination and time to reach it. What we are watching now is not sequential disruption. It is parallel disruption - hitting legal work, financial analysis, content creation, customer service, software engineering, medical analysis and administrative work not over decades, but in months, across every sector all at once. There is no adjacent industry growing fast enough to absorb the displaced, because the displacement is everywhere simultaneously.

And here is the part that breaks every reassuring historical analogy: as one writer recently put it, we are not automating a job or an industry. We are automating the general-purpose capacity to read, reason, decide and execute across domains. That has never happened before.

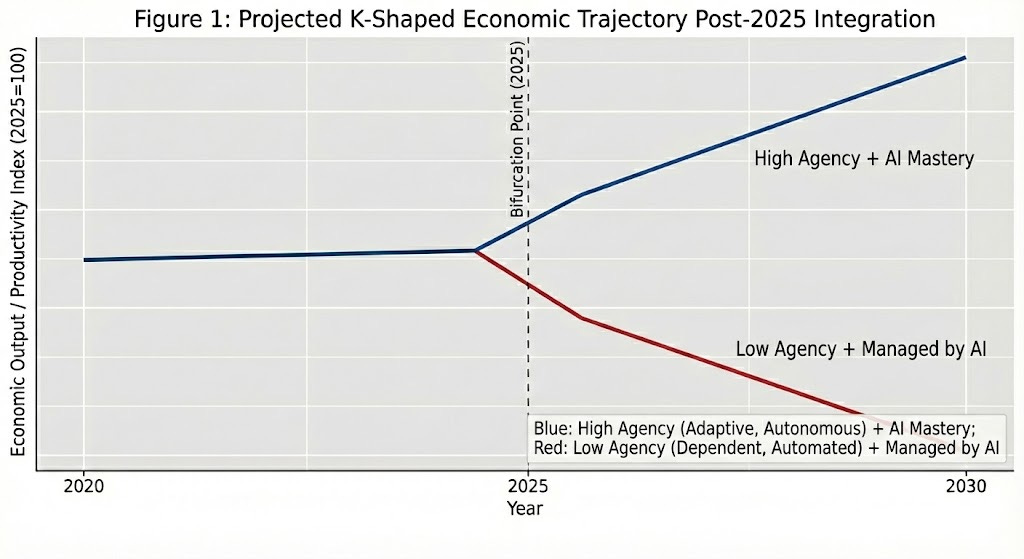

One optimist, trying to be honest while staying encouraging, accidentally named what this produces: “You will either adapt,” he wrote, “or become part of the permanent underclass who can’t earn a living.” He meant it as motivation. Read it again as description. Even the people telling you everything will be fine are describing a bifurcated society. They just call one half of the K an opportunity.

But here is what the K-shaped framing misses. The question isn’t only whether you end up on the ascending curve. It’s what happens to collective power when half the population loses economic leverage entirely. A worker who can be replaced overnight by an AI agent cannot strike, cannot bargain, and certainly cannot withhold labor since they’re no longer able to sell it. The mechanisms working people have used for over a century to claim their fair share of productivity gains - the strike, the union, the credible threat of walking out - dissolve when the boss can replace your entire department before the picket line even forms.

A population without economic leverage is a population available for authoritarian capture. This is not speculation. This is the historical pattern every time mass displacement has outrun collective response.

The chart above shows two trajectories diverging from a single point in 2025. What it cannot show is what Émile Durkheim understood more than a century ago: that when social change outpaces the institutions and values that give people meaning and orientation, the result isn’t just material poverty. It is anomie: a state of normlessness, the dissolution of identity structures, and the loss of the story people tell about who they are and why their lives matter. The paralegal who spent three years building expertise doesn’t just lose income when AI displaces her. She loses her sense of self.

None of this is new, by the way, if seen from the right vantage point. The Caribbean, for example, didn’t need to wait for 2026 to understand what it looks like when the productive system stops needing most of the population. Structural adjustment stripped public sector jobs in Jamaica and forced millions into informal economies. Offshoring took manufacturing from Puerto Rico and left an infrastructure designed for extraction. Call centers brought precarious work to Trinidad, Barbados and Belize, and now those too are being automated away, with nothing beneath them. Walter Rodney showed us that underdevelopment isn’t backwardness. It is produced by external ownership of productive tools, by technological dependency, and by systems designed to extract value from a population rather than build it with them.

The Global South has been living the K-shaped society for decades. Now it’s coming for everyone else.

IV. The Framing Is the Weapon

Here is what every voice in this conversation — the startup founder, the content creator, the venture capitalist, the optimist, the cautious economist — has in common: they all ask the same question. Not “who owns the machines?” or “who captures the value?” but “how do you position yourself to survive what’s coming?”

Adapt or die. Be ‘high agency.’ Get ten steps ahead. Spend an hour a day experimenting. The person who walks into a meeting and says “I used AI to do this in an hour instead of three days” is going to be the most valuable person in the room.

This is sincere advice. Some of it is even useful. But it is advice that operates entirely within a framework that never questions its own foundations. And that is precisely how ideology works — not through deception, but through the limits of what gets imagined as actually possible.

One writer put it plainly: “You can’t hustle your way out of that at scale.” Individual adaptation is a real strategy for the individuals who successfully adapt. It is not a response to a structural crisis that will displace tens of millions of people simultaneously. Telling everyone to be high agency in the face of mass displacement is like telling everyone to win. The advice isn’t wrong. The math just doesn’t work.

Here is the question that almost no one in this conversation is asking: why do the productivity gains from AI flow exclusively to the owners of AI systems?

That is not a law of nature. That is a political arrangement. The same way it was a political arrangement (and not a natural law) when colonial administrators decided that Caribbean sugar profits belonged to London rather than to the people cutting cane. The same way it was a political arrangement when IMF structural adjustment decided that economic “reform” meant cutting public sector wages in Jamaica rather than taxing the corporations extracting the island’s value. “The math works” has always been the language of people whose math doesn’t include your costs.

Peter Steinberger, the developer who built OpenClaw, saw this clearly enough to say it out loud: “This is too important to just give to a company and make it theirs.” His instinct was correct, though his political tools were inadequate. Within days, the project was absorbed into OpenAI’s institutional orbit - still “open source,” still nominally independent, celebrated by three of OpenAI’s top executives in a single evening. The form of openness was preserved while the content of control was captured. Antonio Gramsci called this a passive revolution: technological transformation without political transformation, where power structures change shape without really changing hands.

The language of inevitability is doing the same work. “The math works.” “Adapt or die.” “The K-shaped economy.” These phrases present a political catastrophe as mathematical law - the same way “structural adjustment” presented extraction as reform, the same way “civilization” presented colonial education as uplift. As the political economist Keir Milburn recently observed, the algorithms and institutions we interact with daily are “continually tweaked to ensure they reward ruthlessly competitive, selfish and self-promoting behavior while penalizing those who behave in any other way — until it eventually structures our commonsense understanding of human possibility.”

That is the deepest move. Not just the displacement of labor, but the automation of the ideology that makes displacement feel inevitable. The reward functions embedded in these systems (optimize for efficiency, route around friction, eliminate the human bottleneck) become the common sense of 2026. You don’t see the ideology. You just see that certain behaviors get you through the system and others don’t.

Last week I argued that whoever designs the knowledge systems determines what’s thinkable. This week those systems are acting in the world autonomously, embedding their reward structures into every workflow they touch. The architecture of not knowing, now with AI agency.

There is also a practical problem the optimists haven’t answered: if AI eliminates enough human labor to concentrate economic value in a tiny ownership class, who buys the products? As one cultural philosopher recently noted, a world where a single founder runs a billion-dollar company with almost no human employees is “totally untenable” without a radical redesign of the economic system — and then raised the question no tech booster has answered: who exactly is the consumer base? Capital cannot eliminate labor as a cost without eventually eliminating it as demand. The system just eats itself. Karl Marx called this the tendency toward underconsumption, and it doesn’t require a Marxist to recognize it as a problem.

The framing is the weapon. Accepting it as the only available reality is the wound.

V. Where It All Points

There is a binary at the bottom of all of this, stated plainly by a DevOps engineer who spent an afternoon with an AI system and came out the other side unable to unsee what he’d seen. Either we build “some kind of radical wealth distribution system that keeps society coherent in a world where intelligence is cheap,” he wrote, “or we get the ugly outcomes humans always get when inequality becomes structurally locked in.”

Political violence. Social breakdown. Scapegoating. Authoritarianism. The rich retreating behind literal and figurative walls while everyone else becomes a problem to be managed.

This isn’t doomerism. This is the historical record.

And it’s worth naming one more dimension that most AI commentary ignores entirely. The same logic driving autonomous agents into the workplace is already moving into politics. Imagine competing AI lobbying firms filing regulatory comments at a volume no human operation could match. Autonomous SuperPACs running 24-hour ad campaigns, generating deepfakes, cloning voices, flooding social media with counter-programming before a human strategist has finished their morning coffee. The agentic AI moment isn’t just an economic disruption. It is infrastructure for the consolidation of political power in the hands of whoever can afford the most capable agents. The last few election cycles, heated as they were, may look like a middle school mock election compared to what’s coming.

AI disruption and democratic erosion are not parallel crises converging from separate directions. They are the same crisis, arriving together.

So what does resistance look like when the machines don’t need you?

It starts with refusing the framing. Not “how do I position myself to survive this?” but “who decided the productivity gains belong exclusively to the people who own the systems?” Those are identifiable people who made specific decisions. Decisions can be unmade. As the political economist Keir Milburn argues, the task is building what he calls “popular protagonism” — democratic deliberation and collective action that expands the range of economic life governed by democratic logic rather than capital logic. Social ownership of AI infrastructure. Public investment in the technology rather than private capture of its returns. Democratic accountability over systems that are reshaping everyone’s lives without anyone’s consent.

These are not utopian ideas. They are the same ideas working people have always reached for when capital moved faster than their institutions could follow. They worked, partially, imperfectly, in the labor movements of the early twentieth century. They can work again. But only if the window stays open long enough to act.

The Caribbean has something to say here too. Not as tragedy, but as instruction. Rodney showed that technological dependency is produced, not inevitable. Dependency can be restructured. The plantation economy looked permanent until it didn’t. The colonial order looked natural until people organized to refuse it. The question was never whether resistance was possible. It was whether people understood clearly enough what they were resisting and why.

That is what this piece is trying to do. Not predict the future. Not offer comfort. To name the threshold clearly enough that refusing it becomes thinkable.

Because the first step toward demanding that the abundance generated by these systems serve collective flourishing rather than private accumulation is recognizing that the current arrangement is a choice. Not the math. Not the market. Not the inevitable unfolding of technological progress.

A choice. Made by people. For people who aren’t you.

Understanding that is the beginning of refusal. And refusal is the beginning of everything else.

References

Denning, T. (2026, February 18). You have about 24 months left before your skills expire. Modern Freedom.

Dolman, J. (2026, February 16). AI WILL TAKE YOUR JOBS - EVERYTHING IS CHANGING! https://www.linkedin.com/posts/john-dolman-3836b8251_aiineducation-edtech-ailiteracy-activity-7429101403354136576-AFZL?utm_source=share&utm_medium=member_desktop&rcm=ACoAAATBqzoBkLtY7heLpPMMggHdIB0r9esznWs

Neural Foundry. (2025, December 21). UBI or we’re all screwed. Neural Foundry Substack. https://substack.com/home/post/p-182129185

Griffiths, B. D. (2026, February 12). Author of viral “Something Big is Coming” essay says AI helped him write it — and that proves his point. Business Insider.

Milburn, K. (2026, January 22). We need radical abundance. The Nation. https://www.thenation.com/article/economy/abundance-democrats-housing-oligarchs/

Oks, D. (2026, February 12). Why I’m not worried about AI job loss. David Oks.

Pinchbeck, D. (2026, February 14). All That Is Solid Melts into Code. Liminal News With Daniel Pinchbeck.

The Deep View. (2026, February 16). OpenAI taps OpenClaw for personal AI agents. https://archive.thedeepview.com/p/openai-taps-openclaw-for-personal-ai-agents?utm_source=thedeepview&utm_medium=newsletter&utm_campaign=openai-taps-openclaw-for-personal-ai-agents&_bhlid=d86210503cd4c243f8945bc07759801fa5feaf99

____________________________________________________________________________

Means and Meaning publishes every Tuesday.

Next week, we’ll examine another piece of the machinery — and another opportunity to resist it.

Until then, keep questioning, keep connecting, and keep believing that another world is possible.

~ Chris